The Neural Network Linear Regressor project represents a complete implementation of a deep learning framework built from fundamental principles. Rather than relying on high-level abstractions, this project constructs every component of the neural network pipeline, providing deep insight into the mathematical foundations of modern machine learning.

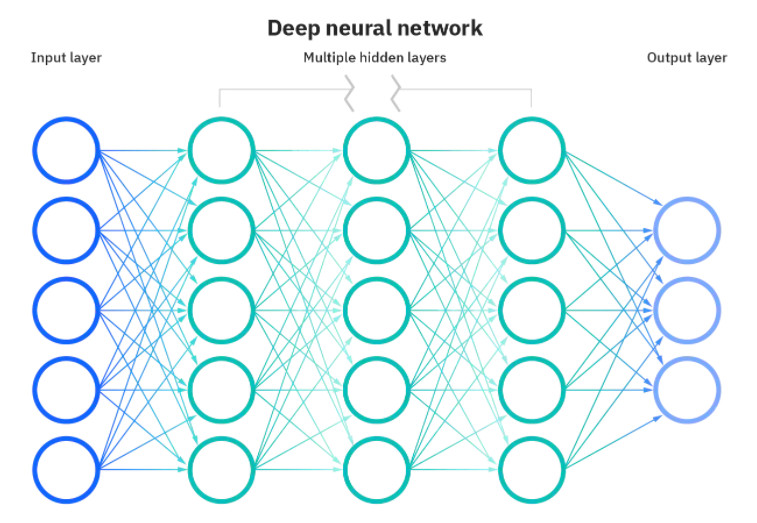

The core architecture consists of a flexible multi-layer network supporting arbitrary configurations of hidden layers and neurons. The LinearLayer class implements the fundamental building block, handling forward propagation through weighted connections and biases, backpropagation of error gradients, and parameter updates via gradient descent. Each layer maintains its own weight matrices and bias vectors, enabling modular composition into deeper architectures.
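The layer described above can be sketched roughly as follows. This is a minimal illustration in NumPy, not the project's actual code: the method names (`forward`, `backward`, `update_params`), the constructor arguments, and the Xavier-style initialisation are all assumptions made for the example.

```python
import numpy as np


class LinearLayer:
    """Fully connected layer computing z = x @ W + b (illustrative sketch)."""

    def __init__(self, n_in, n_out, seed=0):
        rng = np.random.default_rng(seed)
        # Xavier/Glorot uniform initialisation (an assumed choice).
        limit = np.sqrt(6.0 / (n_in + n_out))
        self.W = rng.uniform(-limit, limit, size=(n_in, n_out))
        self.b = np.zeros((1, n_out))
        self._x = None       # input cached by forward() for use in backward()
        self.grad_W = None
        self.grad_b = None

    def forward(self, x):
        # x has shape (batch, n_in); the result has shape (batch, n_out).
        self._x = x
        return x @ self.W + self.b

    def backward(self, grad_z):
        # grad_z is dLoss/dz with shape (batch, n_out).
        self.grad_W = self._x.T @ grad_z
        self.grad_b = grad_z.sum(axis=0, keepdims=True)
        # Return dLoss/dx so the previous layer can continue the chain rule.
        return grad_z @ self.W.T

    def update_params(self, learning_rate):
        # One plain gradient descent step.
        self.W -= learning_rate * self.grad_W
        self.b -= learning_rate * self.grad_b
```

Caching the input in `forward` is what lets `backward` compute the weight gradient without being handed the input again, which keeps each layer self-contained when several are stacked.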

Activation functions provide the nonlinearity needed to learn complex patterns. The implementation supports ReLU, LeakyReLU, and ELU, each paired with its derivative for the backward pass so that gradients propagate correctly. Comparative analysis shows how the choice of activation function affects convergence speed and final model accuracy.
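For reference, the three activations and their derivatives can be written as plain NumPy functions. The function names and the default `alpha` values below are assumptions for illustration; the project may organise them as classes or use different slopes.

```python
import numpy as np


def relu(z):
    return np.maximum(0.0, z)


def relu_grad(z):
    # Derivative is 1 for z > 0 and 0 otherwise (0 is used at z == 0).
    return (z > 0).astype(z.dtype)


def leaky_relu(z, alpha=0.01):
    return np.where(z > 0, z, alpha * z)


def leaky_relu_grad(z, alpha=0.01):
    return np.where(z > 0, 1.0, alpha)


def elu(z, alpha=1.0):
    # Clamp the exponent input so exp() never sees large positive values.
    return np.where(z > 0, z, alpha * (np.exp(np.minimum(z, 0.0)) - 1.0))


def elu_grad(z, alpha=1.0):
    return np.where(z > 0, 1.0, alpha * np.exp(np.minimum(z, 0.0)))
```

In the backward pass, the gradient arriving from the next layer is multiplied elementwise by the matching `*_grad(z)`, where `z` is the pre-activation value cached during the forward pass.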

The training infrastructure goes beyond plain gradient descent. It supports the SGD, Adam, and AdaDelta optimizers, covering momentum-based techniques and adaptive learning rate methods. Each optimizer maintains whatever state it needs to compute parameter updates; Adam, for example, tracks running estimates of the first and second moments of the gradient.
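To make the optimizer state concrete, here is a small sketch of the standard Adam update with bias correction. The class layout, the `step(key, param, grad)` signature, and the per-parameter dictionaries are assumptions for this example, not the project's interface; the hyperparameter defaults are the commonly used ones.

```python
import numpy as np


class Adam:
    """Adam update rule with per-parameter state (illustrative sketch)."""

    def __init__(self, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
        self.lr, self.beta1, self.beta2, self.eps = lr, beta1, beta2, eps
        self.m = {}  # first moment estimate (running mean of gradients)
        self.v = {}  # second moment estimate (running mean of squared gradients)
        self.t = {}  # step counter per parameter, used for bias correction

    def step(self, key, param, grad):
        m = self.m.get(key, np.zeros_like(param))
        v = self.v.get(key, np.zeros_like(param))
        t = self.t.get(key, 0) + 1

        m = self.beta1 * m + (1 - self.beta1) * grad
        v = self.beta2 * v + (1 - self.beta2) * grad ** 2

        # Both moments start at zero, so early estimates are biased low.
        m_hat = m / (1 - self.beta1 ** t)
        v_hat = v / (1 - self.beta2 ** t)

        self.m[key], self.v[key], self.t[key] = m, v, t
        return param - self.lr * m_hat / (np.sqrt(v_hat) + self.eps)
```

A caller would invoke something like `layer.W = opt.step("layer0.W", layer.W, layer.grad_W)` once per parameter per batch; the string key is only a hypothetical way of keeping each parameter's moments separate.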

Data preprocessing forms a critical component of the pipeline, with normalization strategies ensuring proper feature scaling. The Preprocessor class handles both forward normalization for training and inverse transformation for interpreting predictions in the original scale. This bidirectional transformation capability proves essential when working with real-world datasets containing features of vastly different magnitudes.
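The forward and inverse transformations might look like the sketch below. It assumes z-score standardisation (subtract the mean, divide by the standard deviation); the source does not say which normalization the project uses, and the method names `fit`, `apply`, and `revert` are likewise hypothetical.

```python
import numpy as np


class Preprocessor:
    """Per-feature standardisation with an exact inverse (illustrative sketch)."""

    def fit(self, data):
        # Statistics are computed from the training data only.
        self.mean = data.mean(axis=0)
        std = data.std(axis=0)
        # Constant features have zero spread; use 1.0 to avoid dividing by zero.
        self.std = np.where(std == 0, 1.0, std)
        return self

    def apply(self, data):
        return (data - self.mean) / self.std

    def revert(self, data):
        return data * self.std + self.mean
```

Fitting on the training split and reusing the same statistics for validation and test data avoids leaking information from held-out samples. For a regression target, a second instance fitted on the target column lets predictions be mapped back to the original units with `revert`.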

The housing price prediction application demonstrates practical deployment of the neural network framework. Using the California housing dataset, the system learns to predict median house values from features including location, age, and occupancy metrics. Careful feature engineering and hyperparameter tuning achieve strong predictive performance on held-out test data.

Cross-validation provides robust model evaluation by partitioning the data into k folds, with each fold serving once as the validation set while the remaining folds are used for training. Averaging across folds yields performance estimates that are less sensitive to any particular train-test split. The implementation supports both k-fold cross-validation and a traditional held-out validation set, offering flexibility for different evaluation scenarios.
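A k-fold split can be produced with a short generator over shuffled indices. The function name `k_fold_indices` and the fixed default seed are assumptions for illustration.

```python
import numpy as np


def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_idx, val_idx) pairs, one per fold (illustrative sketch)."""
    rng = np.random.default_rng(seed)
    indices = rng.permutation(n_samples)
    # array_split tolerates n_samples not being divisible by k.
    folds = np.array_split(indices, k)
    for i in range(k):
        val_idx = folds[i]
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        yield train_idx, val_idx
```

Each sample lands in the validation set exactly once. A typical loop trains a fresh model on `X[train_idx]`, scores it on `X[val_idx]`, and averages the k validation errors.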

Hyperparameter search infrastructure automates the process of finding optimal network configurations. The system explores combinations of learning rates, optimizer types, hidden layer architectures, and activation functions, tracking validation performance to identify the best configuration. Visualization tools plot training and validation error curves across epochs, revealing convergence characteristics and potential overfitting.
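One simple way to explore such combinations is an exhaustive grid search. The sketch below is an assumption about the approach, since the source does not state whether the search is a grid, random, or something else. `evaluate` is a placeholder for a caller-supplied function that trains a network with the given configuration and returns its validation error.

```python
from itertools import product


def grid_search(search_space, evaluate):
    """Try every combination and keep the lowest score (illustrative sketch).

    search_space maps a hyperparameter name to a list of candidate values.
    evaluate(config) must return a validation error, where lower is better.
    """
    names = list(search_space)
    best_config, best_score = None, float("inf")
    for values in product(*(search_space[name] for name in names)):
        config = dict(zip(names, values))
        score = evaluate(config)
        if score < best_score:
            best_config, best_score = config, score
    return best_config, best_score
```

A search space might look like `{"lr": [0.1, 0.01], "optimizer": ["sgd", "adam"], "hidden": [(16,), (32, 16)]}`. The number of runs grows multiplicatively with each added hyperparameter, so grids are best kept small.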

Model persistence capabilities enable saving trained networks and loading them for future predictions, essential for deploying models in production environments. The complete pipeline from data loading through preprocessing, training, evaluation, and prediction demonstrates end-to-end machine learning system design.
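Saving and loading can be as small as a pair of helpers around Python's `pickle` module. This is an assumed mechanism, since the source does not name the serialisation format, and `save_model` and `load_model` are hypothetical names.

```python
import pickle


def save_model(model, path):
    """Serialise a trained model object to disk (illustrative sketch)."""
    with open(path, "wb") as f:
        pickle.dump(model, f)


def load_model(path):
    """Restore a model previously written by save_model."""
    with open(path, "rb") as f:
        return pickle.load(f)
```

Pickle files can execute arbitrary code when loaded, so only files from a trusted source should be opened this way. Saving the fitted preprocessor together with the network keeps predictions consistent with the scaling used in training.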

This project showcases deep understanding of neural network fundamentals including backpropagation mathematics, gradient-based optimization, regularization strategies, and empirical evaluation methodologies. The ability to implement these concepts from scratch, rather than treating them as black boxes, provides the foundation for understanding and extending modern deep learning systems.